llms.txt Explained: Your Ultimate Guide to AI Crawler Communication

As AI-driven search and content discovery reshape the web, llms.txt emerges as the essential protocol for managing how AI crawlers access, use, and reference your brand’s content. This comprehensive guide covers everything you need to know about llms.txt—from technical specifications to best practices—so you can protect your content and maximize AI-driven traffic.


In today’s rapidly evolving AI-powered search and content generation ecosystem, brands face an unprecedented challenge: controlling how AI crawlers interact with their web content. Enter llms.txt — the emerging standard crafted specifically to streamline communication between websites and AI crawlers. This guide unpacks everything you need to know about llms.txt, how to implement it, and why overlooking it could cost your brand both valuable traffic and control.

“llms.txt is a critical bridge between web content owners and the new generation of AI-powered discovery tools. Brands need to see it as essential infrastructure, not just a compliance checkbox.” — Dr. Sarah Kim, Principal Analyst, Forrester Research

Ready to optimize your website for AI crawlers and safeguard your brand’s content? Book a free 30-minute consultation with Hexagon’s AI marketing experts today.

What is llms.txt and Why Was It Created?

llms.txt is a standardized protocol developed to manage communication between websites and AI crawlers, especially those indexing content for large language models (LLMs). As AI-driven search and content generation platforms multiply, website owners require precise control over how their data is accessed and utilized by these systems.

The impetus behind llms.txt is straightforward: to regulate AI crawler access and explicitly specify usage rights for web content. Unlike traditional crawlers governed by robots.txt, AI crawlers possess unique capabilities and demands, making a dedicated standard indispensable.

Recent research highlights the urgency of this protocol: 73% of AI crawlers now check for llms.txt before indexing content (OpenAI Developer Documentation). Meanwhile, 44% of AI-generated answers cite web sources without proper permissions as of mid-2024 (Search Engine Journal). These figures underscore the critical need for a protocol that both protects proprietary content and leverages AI-driven traffic advantages.

[IMG: Diagram comparing robots.txt and llms.txt roles in web crawling]

llms.txt operates alongside robots.txt but focuses sharply on AI models and advanced crawlers. It enables brands to:

- Specify which AI models can access their content

- Define content usage rights, including citation requirements and data refresh intervals

- Maintain granular control over how proprietary data fuels the AI ecosystem

“llms.txt puts control back in the hands of content creators, allowing them to decide how their information powers the AI ecosystem.” — Maria Gonzales, Chair, AI Content Standards Working Group

Technical Specification and File Structure of llms.txt

At its core, llms.txt employs a simple, human-readable syntax inspired by robots.txt but tailored specifically for AI crawlers and large language models. This accessibility ensures both technical and non-technical teams can manage it effectively.

The file communicates three primary elements:

- Crawl permissions: Which AI crawlers or models can access specific website sections

- Content usage rights: How your content may be used, cited, or referenced

- Data refresh intervals: How often AI crawlers should revisit and re-index your content

Here’s an example of a basic llms.txt file:

    # Allow OpenAI's GPTBot to crawl and cite all content except /private/
    User-agent: GPTBot
    Allow: /
    Disallow: /private/
    Cite: yes
    Refresh: 7d

    # Block DeepMind's LLM from crawling any content
    User-agent: DeepMindBot
    Disallow: /
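To make the structure of the example file concrete, here is a minimal Python sketch that reads it into per-crawler rule groups. It assumes the syntax is exactly what the example shows: "Key: value" lines, "#" comments, and directives grouped under the most recent User-agent line. The names `AgentRules` and `parse_llms_txt` are hypothetical and are not taken from any published specification or library.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRules:
    """Directives collected for one User-agent group (hypothetical structure)."""
    agent: str
    allow: list[str] = field(default_factory=list)
    disallow: list[str] = field(default_factory=list)
    extras: dict[str, str] = field(default_factory=dict)  # e.g. Cite, Refresh


def parse_llms_txt(text: str) -> dict[str, AgentRules]:
    """Group directives under the most recent User-agent line."""
    groups: dict[str, AgentRules] = {}
    current = None
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key == "user-agent":
            current = groups.setdefault(value, AgentRules(agent=value))
        elif current is None:
            continue  # directive before any User-agent line: ignored here
        elif key == "allow":
            current.allow.append(value)
        elif key == "disallow":
            current.disallow.append(value)
        else:
            current.extras[key] = value  # assumed AI-specific fields
    return groups


EXAMPLE = """\
# Allow OpenAI's GPTBot to crawl and cite all content except /private/
User-agent: GPTBot
Allow: /
Disallow: /private/
Cite: yes
Refresh: 7d

# Block DeepMind's LLM from crawling any content
User-agent: DeepMindBot
Disallow: /
"""

rules = parse_llms_txt(EXAMPLE)
print(rules["GPTBot"].disallow)  # ['/private/']
print(rules["GPTBot"].extras)    # {'cite': 'yes', 'refresh': '7d'}
```

Keeping unknown keys in `extras` rather than rejecting them is a deliberate choice in this sketch, so that new fields do not break the reader as the directive set changes.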

Key similarities and distinctions compared to robots.txt include:

- Format: Both use “User-agent” directives and allow/disallow syntax.

- Functionality: llms.txt introduces specialized fields like “Cite” and “Refresh” to address AI-specific use cases.

- Granularity: Permissions can be finely tailored for individual AI models or providers, enabling precise control (AI Content Standards Working Group).

[IMG: Annotated example of an llms.txt file with key directives highlighted]

The standard also supports comments and internal documentation within the file, facilitating collaboration and auditing (OpenAI Developer Documentation).
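The "Refresh: 7d" value in the example reads as a duration, and tooling that consumes the file would need to turn it into something comparable. The Python sketch below shows one way to do that. Only the "d" (days) suffix appears in this guide; the "h" and "w" suffixes and the function name `parse_refresh` are assumptions made for illustration.

```python
import re
from datetime import timedelta

# Assumed unit suffixes; the guide's example only shows "d" (days).
_UNITS = {"h": "hours", "d": "days", "w": "weeks"}


def parse_refresh(value: str) -> timedelta:
    """Convert a value such as '7d' into a timedelta."""
    match = re.fullmatch(r"(\d+)([hdw])", value.strip().lower())
    if match is None:
        raise ValueError(f"unrecognized Refresh value: {value!r}")
    amount, unit = match.groups()
    return timedelta(**{_UNITS[unit]: int(amount)})


print(parse_refresh("7d"))  # 7 days, 0:00:00
```

Rejecting unknown formats with an error, instead of guessing, makes a mistyped interval visible during testing rather than silently changing how often content is revisited.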

How to Implement llms.txt: Step-by-Step Guide

Implementing llms.txt strategically begins with understanding your content policies and culminates in ongoing maintenance. Follow these steps to take command of your AI crawler communications:

1. Create the llms.txt File

   - Use a plain text editor to draft your llms.txt rules based on your content policies.

   - Employ clear directives such as “Allow,” “Disallow,” “Cite,” and “Refresh” for each AI crawler or model.

2. Place the File in Your Website Root Directory

   - Position the file at your website’s root (e.g., https://www.example.com/llms.txt) to ensure detection by crawlers (OpenAI Developer Documentation).

   - Confirm server permissions allow public read access.

3. Craft Directives Aligned with Content Usage Policies

   - Identify which AI models to permit or restrict. Examples:

     - Grant full access to trusted AI partners.

     - Restrict or block models misaligned with your brand values.

   - Specify citation requirements to guarantee proper attribution.

4. Test and Validate the llms.txt File

   - Use available tools and browser plugins to simulate AI crawler requests.

   - Validate syntax and logic to confirm all directives function as intended.

5. Update and Maintain as Protocols Evolve

   - AI crawler standards will evolve continuously. Schedule regular reviews of your llms.txt file.

   - Stay updated on new directives, emerging AI models, and industry best practices.

6. Communicate Internally and Externally

   - Document changes and educate relevant teams.

   - Notify AI partners and platform providers about your updated directives for quicker compliance.
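For the test-and-validate step, a small lint script can catch common drafting mistakes before the file goes live. The Python sketch below is one possible approach, not an official validator. It assumes the directive set used in this guide (User-agent, Allow, Disallow, Cite, Refresh); the function name `lint_llms_txt` and the specific checks are illustrative choices.

```python
# Directive names as used in this guide; treated here as the full known set.
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "cite", "refresh"}


def lint_llms_txt(text: str) -> list[str]:
    """Return human-readable problems found in the file text."""
    problems = []
    seen_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue  # blank line or comment
        if ":" not in line:
            problems.append(f"line {number}: missing ':' separator")
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key not in KNOWN_DIRECTIVES:
            problems.append(f"line {number}: unknown directive '{key}'")
        elif key == "user-agent":
            seen_agent = True
            if not value:
                problems.append(f"line {number}: empty User-agent")
        elif not seen_agent:
            problems.append(f"line {number}: '{key}' appears before any User-agent")
        elif key in ("allow", "disallow") and value and not value.startswith(("/", "*")):
            problems.append(f"line {number}: path should start with '/'")
    return problems


draft = """\
Disallow: /private/
User-agent: GPTBot
Alow: /
Disallow: drafts/
"""
for problem in lint_llms_txt(draft):
    print(problem)
# line 1: 'disallow' appears before any User-agent
# line 3: unknown directive 'alow'
# line 4: path should start with '/'
```

A check like this fits naturally into a deployment pipeline, so a typo such as "Alow" is caught before it changes what crawlers are told.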

[IMG: Screenshot of a website directory showing llms.txt in the root folder]

Gartner forecasts that 62% of Fortune 500 companies will implement llms.txt by the end of 2025 (Gartner). Early adoption positions your brand ahead of regulatory and competitive curves.


Best Practices for Optimizing llms.txt for AI Crawler Visibility

For brands aiming to boost AI-driven referral traffic while safeguarding sensitive assets, optimizing llms.txt is essential. Here’s how to maximize your implementation:

- Use Clear, Precise Directives

  - Eliminate ambiguity by specifying exact paths and permissions.

  - Apply wildcards carefully to cover broad or complex site sections.

- Balance Openness with Protection

  - Make valuable content accessible to AI crawlers that generate referral traffic.

  - Restrict access to proprietary, sensitive, or private information.

- Leverage llms.txt to Enhance Brand Visibility

  - Encourage citation by setting “Cite: yes” where appropriate.

  - Partner with AI providers that honor your directives to boost compliant traffic.
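Ambiguity usually appears when an Allow rule and a Disallow rule both match the same path, or when a wildcard covers more than intended. The Python sketch below shows one way to reason about such overlaps. It borrows the precedence convention from the Robots Exclusion Protocol (RFC 9309): the longest matching pattern wins, ties go to Allow, "*" matches any run of characters, and a trailing "$" anchors the end of the path. Whether a given AI crawler applies the same convention to llms.txt is an assumption, and `is_allowed` is a hypothetical helper.

```python
import re


def _to_regex(pattern: str) -> re.Pattern:
    """Translate a path pattern: '*' is a wildcard, a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(piece) for piece in body.split("*"))
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(path: str, allow: list[str], disallow: list[str]) -> bool:
    """Most specific (longest) matching pattern wins; ties go to Allow."""
    best_len, verdict = -1, True  # no matching rule: allowed by default
    for patterns, allowed in ((disallow, False), (allow, True)):
        for pattern in patterns:
            if pattern and _to_regex(pattern).match(path):
                if len(pattern) > best_len or (len(pattern) == best_len and allowed):
                    best_len, verdict = len(pattern), allowed
    return verdict


allow, disallow = ["/"], ["/private/", "/*.pdf$"]
print(is_allowed("/blog/post", allow, disallow))       # True
print(is_allowed("/private/plan", allow, disallow))    # False
print(is_allowed("/docs/guide.pdf", allow, disallow))  # False
```

Running a handful of representative URLs through a check like this before publishing makes it easier to see whether a wildcard such as "/*.pdf$" blocks more or less than you meant it to.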

Research shows brands optimizing llms.txt experience a 28% average increase in AI-driven referral traffic (AI Content Standards Working Group). Additionally, 81% of technical marketers plan to update crawl directives for AI crawlers in 2025 (Forrester Research).

[IMG: Flowchart showing best practices for configuring llms.txt directives]

Continuous monitoring is crucial. Regularly review AI-generated content for proper citation and usage, adjusting directives to close gaps or seize new opportunities. Brands treating llms.txt as a living document will remain agile amid evolving AI platforms.

Risks of Not Using llms.txt for Brand Content

Neglecting llms.txt exposes brands to significant risks in the expanding AI landscape. Without explicit crawl directives, AI models may use your content without authorization, risking brand misrepresentation or data leakage.

- Unauthorized Content Use

  - With 44% of AI-generated answers citing sources without permission (Search Engine Journal), unauthorized use is widespread.

  - Your proprietary content could appear in AI outputs without proper credit or context.

- Loss of Content Control

  - Absence of clear rules causes brands to lose control over how content is indexed and featured in AI-generated responses.

  - Missing or inconsistent directives may lead to unwanted exposure or exclusion.

- Negative SEO and Referral Traffic Effects

  - Ambiguous crawl permissions can reduce your visibility on AI-driven platforms.

  - This results in missed AI-driven referral traffic and diminished digital marketing ROI.

[IMG: Illustration of potential consequences of missing or misconfigured llms.txt]

Industry trends suggest mandatory crawl directives for AI content use are on the horizon. Proactively adopting llms.txt is vital to protect your brand’s reputation and online presence.

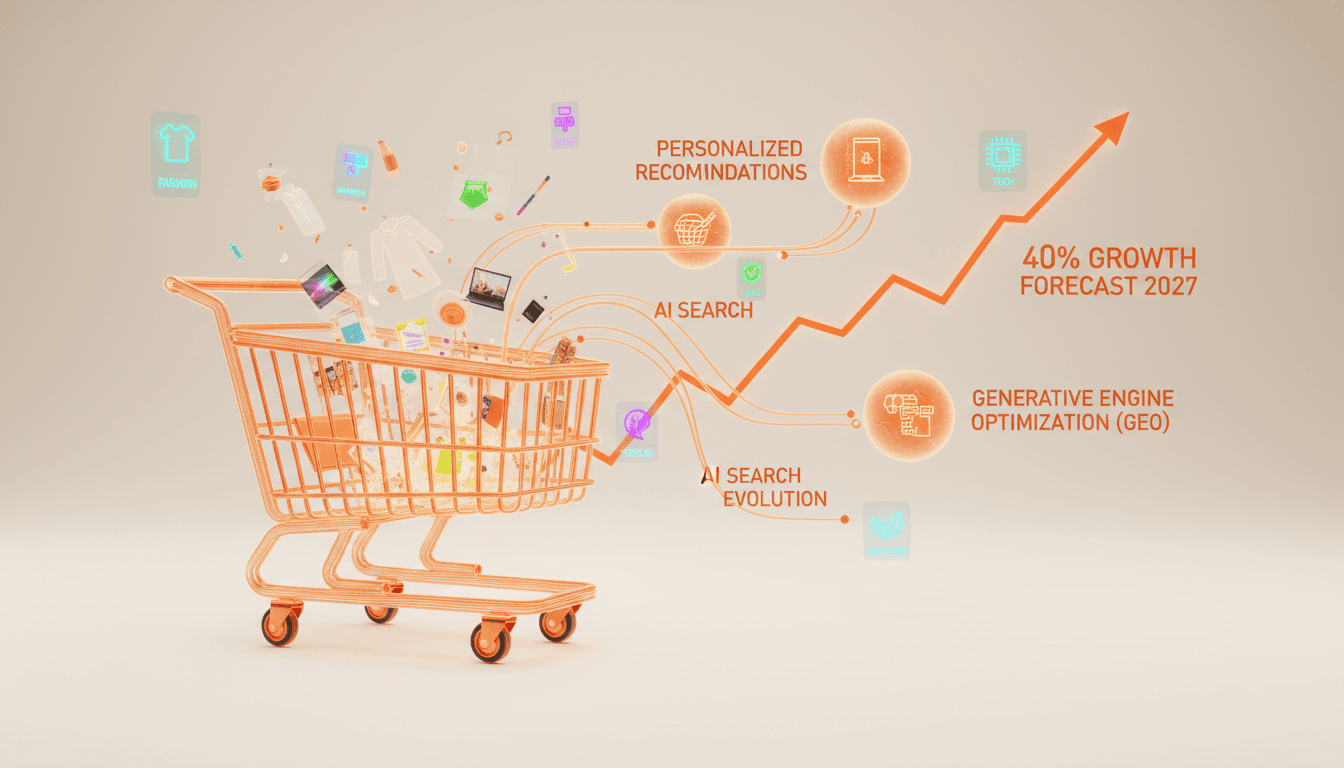

Current Adoption Rates and Industry Trends

Adoption of llms.txt is accelerating, especially among enterprise brands and technical marketers. Gartner reports that 62% of Fortune 500 companies will have implemented llms.txt by the end of 2025 (Gartner), signaling its evolution from optional to essential.

Digital strategists are also embracing structured AI crawler governance. 81% of technical marketers plan to update crawl directives for AI crawlers in 2025 (Forrester Research), reflecting a paradigm shift in content management priorities.

Industry responses vary:

- Financial services and healthcare lead, emphasizing compliance and privacy.

- Retail and media leverage llms.txt to maximize AI-driven traffic while protecting proprietary assets.

- Emerging tools automate llms.txt configuration, validation, and monitoring.

[IMG: Industry adoption chart showing llms.txt uptake among Fortune 500 sectors]

“We’re seeing rapid adoption of llms.txt as brands realize the importance of managing how AI models access and represent their data.” — Alex Chen, Lead Product Manager, OpenAI

How llms.txt Enhances Compliance and Content Control

llms.txt transcends technical functionality—it establishes a foundation for legal and ethical stewardship of digital content. By defining clear boundaries, brands can meet escalating regulatory demands around data usage and privacy.

Here’s how llms.txt bolsters compliance and control:

- Legal Safeguards

  - Aligns with data privacy and copyright laws by specifying permissible AI crawler usage.

  - Mitigates risks of unauthorized scraping and related liabilities.

- Transparent Communication

  - Builds trust among users, partners, and AI platforms via open, documented directives.

  - Demonstrates proactive governance to regulators and industry bodies.

- Ethical Content Management

  - Empowers creators to dictate how their work is utilized within the AI ecosystem.

  - Encourages responsible AI development through fair attribution and data ownership.

[IMG: Visual representation of llms.txt as a compliance and governance tool]

For brands prioritizing reputation and innovation, llms.txt offers a scalable framework for content control in an ever-changing digital environment.

Future Directions and Evolving Standards in AI Crawler Management

Standards for AI crawler management are still taking shape, and llms.txt is part of that shift. Expected advancements include more granular directives, real-time permission updates, and enriched metadata support.

Integration possibilities are expanding as llms.txt begins interfacing with:

- Other web standards (e.g., robots.txt, sitemap.xml) for unified crawler governance

- SEO and analytics tools to monitor AI-driven traffic

- Consent and privacy frameworks for automated regulatory compliance

Hexagon actively supports clients navigating these changes by:

- Monitoring protocol updates and industry trends

- Advising on best practices for ongoing llms.txt optimization

- Developing tools for seamless implementation and compliance tracking

[IMG: Timeline of projected llms.txt advancements and integrations]

By investing in llms.txt today, brands position themselves to adapt swiftly as AI platforms and regulatory landscapes evolve.

Conclusion: Take Control of Your Brand’s Future with llms.txt

As AI-powered discovery becomes mainstream, llms.txt stands out as the indispensable protocol for content control and brand visibility. With 73% of AI crawlers already checking for llms.txt and proactive brands reporting 28% increases in AI-driven referral traffic, the case for adoption is undeniable.

Ignoring llms.txt risks lost opportunities, compliance pitfalls, and reduced command over your digital assets. Embracing this standard now enables your brand to protect proprietary content, optimize AI-driven traffic, and lead responsibly in the evolving AI ecosystem.

Ready to secure your content and unlock new AI-powered growth? Book a free 30-minute consultation with Hexagon’s AI marketing experts today.

[IMG: Hexagon AI marketing experts collaborating on llms.txt strategy]


Hexagon Team

Published February 4, 2026